In our last installment, we introduced a solution for syncing large or already compressed files around without strictly commit’ing them to git proper when collaborating on a munki repo. It leverages the git-fat add-on/script, which relies only on Python 2.7 and rsync. Let’s talk about how, if you followed Hannes Juutilainen’s wiki page on setting up git to work with your munki repo, you’d migrate over to this newer workflow.

First, we need to set up git-fat, which starts by ‘installing’ it (putting it in your path). The original script isn’t as actively developed as one particular fork (the one you’d get if you installed it with pip), so we’re going to grab that fork in the simplest way possible (as a user that’s allowed to run commands with sudo):

```bash
curl https://raw.githubusercontent.com/cyaninc/git-fat/master/git_fat/git_fat.py | sudo tee /usr/local/bin/git-fat && sudo chmod +x /usr/local/bin/git-fat
```

You’ll see the script you just curl’d and piped to sudo fly past while tee’d to stdout, and now it’s executable & in your path. <insert meme about testing code in production and piping internet scripts to bash here>
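If you want a quick sanity check that everything git-fat needs is reachable, here’s a minimal sketch. check_git_fat_prereqs is a hypothetical helper (not part of git-fat); it only looks for git-fat, rsync, and a python interpreter on your PATH. Assuming the script’s shebang calls python through env, whichever python comes first on your PATH is the one it will use, so it’s worth confirming that one is 2.7.

```bash
# Hypothetical helper (not part of git-fat): report whether the commands
# git-fat relies on can be found on the current PATH.
check_git_fat_prereqs() {
  local cmd missing=0
  for cmd in git-fat rsync python; do
    if command -v "$cmd" >/dev/null 2>&1; then
      echo "found:   $cmd -> $(command -v "$cmd")"
    else
      echo "MISSING: $cmd" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Usage:
#   check_git_fat_prereqs && python --version   # expect a 2.7.x interpreter
```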

As a safety net, before we really start making changes you could zip up the .git folder at the root of the repo on each admin workstation and on the vhost, back up the repo on your configured remote(s), and commit all your work with a tag for good measure. As Hannes said, and I’ll take this chance to reiterate, version control is not backup.
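Here’s one way to script that safety net, as a sketch: snapshot_munki_repo, the commit message, and the tag name are all made up for illustration, and I’ve swapped in tar for zip since it’s present on both macOS and Linux hosts. It commits anything outstanding, tags it, and then archives the .git folder; pushing the tag to your remote(s) is left as a separate step.

```bash
# Hypothetical helper: commit outstanding work, tag it, then archive .git.
snapshot_munki_repo() {
  local repo="$1" dest="$2" tag="$3"
  (
    cd "$repo" || exit 1
    git add -A || exit 1
    # Commit only if something is actually staged
    git diff --cached --quiet || git commit -q -m "Snapshot before git-fat migration" || exit 1
    git tag -a "$tag" -m "Pre git-fat migration snapshot" || exit 1
  ) || return 1
  mkdir -p "$dest" || return 1
  # Archive the entire .git folder (history, config, hooks)
  tar -czf "$dest/$(basename "$repo")-git-$tag.tar.gz" -C "$repo" .git
}

# Usage (then push the tag to each remote, e.g. git push origin --tags):
#   snapshot_munki_repo /path/to/munki_repo ~/munki-backups pre-git-fat
```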

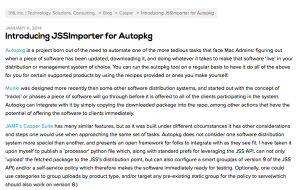

Two support files are needed as well. First, the .gitfat file tells the script which location you’ll be rsync’ing to and from:

```ini
[rsync]
remote = localhost:/tmp/fat-store
```

We’re using localhost here purely for demonstration, as the practice walkthrough does. But because .gitfat is now a shared file in the repo that every admin will use, point it at a server all admins are allowed to access, with enough space for just what you’re syncing. You’d also probably want to set up ssh key-based auth to that host, so the web host serving munki clients can pull from it unattended, perhaps with a shared or individual identity file referenced in ~/.ssh/config, as per http://stackoverflow.com/questions/5527068/how-do-you-use-an-identity-file-with-rsync
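For the identity-file setup, a sketch along these lines appends a Host stanza to ~/.ssh/config so ssh, and therefore the rsync git-fat runs, picks up a dedicated key. The host fatstore.example.com, the user munki, the key path, and the store path are all placeholders, and add_fatstore_ssh_entry is a hypothetical helper.

```bash
# Hypothetical helper: add an ssh config stanza so ssh (and the rsync that
# git-fat runs over it) uses a dedicated identity file for the fat-store.
add_fatstore_ssh_entry() {
  local host="$1" user="$2" keyfile="$3" config="${4:-$HOME/.ssh/config}"
  mkdir -p "$(dirname "$config")" || return 1
  # Don't add a duplicate stanza if this Host is already configured
  if ! grep -qxF "Host $host" "$config" 2>/dev/null; then
    # Leading newline guards against a config file lacking a trailing newline
    printf '\nHost %s\n    User %s\n    IdentityFile %s\n    IdentitiesOnly yes\n' \
      "$host" "$user" "$keyfile" >> "$config" || return 1
  fi
  chmod 600 "$config"
}

# Usage (host, user, key path, and store path are placeholders):
#   ssh-keygen -t ed25519 -f ~/.ssh/munki_fatstore -N ''
#   # ...append ~/.ssh/munki_fatstore.pub to ~/.ssh/authorized_keys on the store host
#   add_fatstore_ssh_entry fatstore.example.com munki ~/.ssh/munki_fatstore
#   # .gitfat would then use:  remote = fatstore.example.com:/srv/fat-store
```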

Next you’d add the .gitattributes file, which tells git which files git-fat should intercept when commit’ing, so only placeholder files are added to the index of the local repo you’ve made changes in. If you don’t expect to mess about with icons or per-department banner designs very often, you could leave .zip and .png out, but I’m trying to head off future flubs by fellow admins with a stray ‘git add .’

```
*.mpkg filter=fat -crlf
*.pkg filter=fat -crlf
*.png filter=fat -crlf
*.dmg filter=fat -crlf
*.zip filter=fat -crlf
```
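If you’d rather generate that file than hand-edit it, and confirm git actually maps your extensions to the fat filter, here’s a minimal sketch. write_fat_gitattributes is hypothetical and overwrites any existing .gitattributes; git check-attr is a standard git command that reports which filter a given path would get.

```bash
# Hypothetical helper: write one "filter=fat -crlf" line per extension to
# .gitattributes in the given repo (same pattern as the file above).
write_fat_gitattributes() {
  local repo="$1"; shift
  local ext
  : > "$repo/.gitattributes" || return 1   # start from an empty file
  for ext in "$@"; do
    printf '*.%s filter=fat -crlf\n' "$ext" >> "$repo/.gitattributes"
  done
}

# Usage: write the file, then ask git which filter a path would get.
#   write_fat_gitattributes . mpkg pkg png dmg zip
#   git check-attr filter -- pkgs/apps/Example.pkg   # -> pkgs/apps/Example.pkg: filter: fat
```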

You’re ready to commit those two files as normal, so all admins get them when they pull for updates. Then run git fat init to update the local .git/config file, and we’re all set to move on.
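To confirm git fat init did its job, you can check that git now knows how to run the fat filter. This assumes git fat init records filter.fat.clean and filter.fat.smudge in .git/config (that’s how git resolves the filter=fat attribute); fat_filter_configured is a hypothetical helper that only tests for those two keys, not their values.

```bash
# Hypothetical check: .gitattributes only names the "fat" filter; git needs
# filter.fat.clean and filter.fat.smudge in the repo's config before it will
# route matching files through git-fat.
fat_filter_configured() {
  local repo="${1:-.}"
  git -C "$repo" config --get filter.fat.clean >/dev/null &&
    git -C "$repo" config --get filter.fat.smudge >/dev/null
}

# Usage, after committing .gitfat and .gitattributes:
#   git add .gitfat .gitattributes && git commit -m "Add git-fat config"
#   git fat init
#   fat_filter_configured && git config --get-regexp '^filter\.fat\.'
```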

Now we’d remove any lines in .gitignore that match the file extensions we listed in .gitattributes, along with any ignored paths that contain those file types. If you’ve got a lot of packages, you may want to break this out one commit at a time and un-ignore just the icons and client_resources zips first, maybe keeping /pkgs/apps ignored for now, as you’ll be inflating the local repo with a copy of each of those items. On a slower host, each add and commit step took me 40 seconds with just the 1GB of Office/Lync pkgs, and 6 minutes EACH to add/commit/push CS6. Understand that this host will now also have an inflated .git/fat/objects folder: it still keeps things out of (regular) git’s internal accounting, but it duplicates every file matched by .gitattributes. Then you’d run git fat push to rsync everything not yet pushed up to the ‘fat-store’.
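Editing .gitignore by hand works fine, but if you’re un-ignoring in batches, a sketch like this strips just the lines ending in the extensions you name. unignore_extensions is hypothetical, it only matches patterns that end in .ext (so directory ignores like /pkgs/apps stay put), and the folder names in the usage comments are examples.

```bash
# Hypothetical helper: drop .gitignore lines that end in one of the given
# extensions (e.g. "*.pkg"), leaving directory ignores alone.
unignore_extensions() {
  local ignore_file="$1"; shift
  [ "$#" -gt 0 ] || return 1
  local joined tmp
  joined=$(IFS='|'; echo "$*")          # e.g. "mpkg|pkg|png|dmg|zip"
  tmp=$(mktemp) || return 1
  # grep -v exits 1 when every line is filtered out; only treat >1 as an error
  grep -vE "\\.(${joined})\$" "$ignore_file" > "$tmp" || [ $? -eq 1 ] || { rm -f "$tmp"; return 1; }
  cat "$tmp" > "$ignore_file"           # rewrite in place, keeping the file's permissions
  rm -f "$tmp"
}

# Usage, one batch at a time (folder names are examples):
#   unignore_extensions .gitignore png zip
#   git add icons client_resources && git commit -m "Track icons via git-fat"
#   git fat push
```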

One point of note: if you use a GUI client like Sourcetree or the GitHub for Mac app (I didn’t test the text editors many folks also use to interact with version control), it may not pick up on the changed .git/config and will therefore ignore git-fat. This only affects the workflow when adding ‘assets’ (pkgs, icons, etc.), though, so anyone who only needs to make catalog/manifest/pkginfo changes can use any GUI client without dropping to the command line.

Once those files are un-ignored and rsync’d to the go-between server, you’re ready to push your commit’d changes to the remote. Afraid all the new directories will overwhelm the server? You’re in for a pleasant surprise: all the server sees are tiny placeholders (well under 1KB each) with the same names as the previously ignored files. If you cat one of those files, you’ll see the SHA-1 hash git-fat uses in its internal accounting to track the real file. As the git remote is a special kind of host, it’s not until you pull from another admin workstation that you see the rest of the process.
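If you want to tell placeholders from real files without eyeballing each one, here’s a small sketch. It assumes the placeholder format of upstream git-fat, where the file starts with the marker #$# git-fat followed by the SHA-1 and the original size; cat one of your own placeholders to confirm the fork you installed does the same before relying on it. is_fat_placeholder is a hypothetical helper.

```bash
# Hypothetical helper. ASSUMPTION: placeholders begin with the 12-byte marker
# "#$# git-fat " (upstream git-fat's format). NUL bytes from binary files are
# stripped so bash doesn't warn while comparing.
is_fat_placeholder() {
  [ -f "$1" ] && [ "$(head -c 12 "$1" | tr -d '\000')" = '#$# git-fat ' ]
}

# Usage: list files in the working tree that are still placeholders.
#   find . -path ./.git -prune -o -type f -print | while IFS= read -r f; do
#     is_fat_placeholder "$f" && echo "$f"
#   done
```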

First, from the workstation collaborating on the repo with you, they’d pull the changes into either ‘master’ or the applicable branch. If they haven’t done so already, they need to install git-fat, as above. They’d cd to the directory they pull’d to and run git fat init, which updates their local .git/config file, as above. Now the fun part: they can run git fat status and see the hashes of all the files not yet rsync’d to their local copy of the repo, but they don’t need to run ‘git fat pull’ to sync any of it down to their workstation. Instead, they can work as normal, updating their local repo super-fast with MunkiAdmin and commit’ing their changes. The most important step comes next: they should run git fat push to rsync the fat files before push’ing their changes with (regular, command line) git to the remote, in whatever branch they work on.
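Since forgetting git fat push is the easy mistake here, a collaborator could wrap the two pushes together. This is a sketch, not something git-fat provides: fat_push_then_git_push is a hypothetical name, and it simply refuses to run the regular git push if git fat push fails.

```bash
# Hypothetical wrapper: rsync the fat objects first, and only run the regular
# git push if that succeeded, so the remote never gets placeholders whose
# contents aren't in the fat-store yet. Extra arguments go to git push.
fat_push_then_git_push() {
  if ! git fat push; then
    echo "git fat push failed; skipping git push" >&2
    return 1
  fi
  git push "$@"
}

# Typical session on a collaborator's workstation (branch name is an example):
#   git pull origin master
#   git fat init          # once per clone
#   git fat status        # lists objects not yet rsync'd locally
#   ...edit with MunkiAdmin, then git commit...
#   fat_push_then_git_push origin master
```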

Now comes the final piece of the puzzle for me, as I specifically didn’t want the duplication mentioned above, where git-fat keeps a copy of every whole file in its .git/fat/objects folder. On the web host for your munki server, install git-fat and, in addition to running git fat init, add a new git hook. (Another implementation detail: if you’re on a platform without Python 2.7, you may want to at least install gcc and build Python 2.7 with make altinstall, then update the shebang in /usr/local/bin/git-fat to point to that interpreter instead of env. I personally didn’t want to bother wrapping my head around putting it in a virtualenv, but I’m sure that’s doable as well.)
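For the altinstall route, the shebang rewrite can be done with sed, but here’s a portable sketch that sidesteps the GNU vs BSD differences in sed -i. set_shebang is hypothetical, and /usr/local/bin/python2.7 assumes the default /usr/local prefix for the altinstall build.

```bash
# Hypothetical helper: point a script's shebang at a specific interpreter,
# e.g. a Python 2.7 built with "make altinstall" (which installs python2.7
# without replacing any existing "python"). Build steps, roughly:
#   ./configure --prefix=/usr/local && make && sudo make altinstall
set_shebang() {
  local script="$1" interp="$2" tmp
  tmp=$(mktemp) || return 1
  {
    printf '#!%s\n' "$interp"
    tail -n +2 "$script"          # everything after the old shebang line
  } > "$tmp" || { rm -f "$tmp"; return 1; }
  cat "$tmp" > "$script"          # rewrite in place, keeping mode and owner
  rm -f "$tmp"
}

# Usage, from a shell that can write to /usr/local/bin (e.g. after sudo -s):
#   set_shebang /usr/local/bin/git-fat /usr/local/bin/python2.7
```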

Put the following in a file named post-merge in .git/hooks, and make sure it is executable for the user running git to pull updates:

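```bash
#!/bin/bash
## uncomment the following line for debug output
# set -x
## if on a RHEL system with selinux enabled, you may want to also chcon the synced file to allow http_ access
shopt -s nullglob  # with no objects, skip the loop instead of iterating over a literal '*'
# git runs hooks from the top of the working tree, so this path is relative to the repo root
for file in .git/fat/objects/*; do
  /bin/chmod 700 "$file"        # make sure the object file is writable
  /bin/cp -v /dev/null "$file"  # truncate it to zero bytes
done
```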

This hook empties every object file in .git/fat/objects (the whole-file copies mentioned above) by copying /dev/null over each one, and it’s triggered by a (regular) git pull. You can uncomment set -x to turn on its debug output, and remove the -v from cp if you’d rather not see its progress. It’s a bit of a hack, but I’m lazy and it works. Follow that by immediately running git fat pull, or add that to the end of your hook if you want to lose the extra step. Git-fat will only update the .git/fat/objects folder with objects that haven’t fully sync’d yet, and if your rsync gets interrupted you should have another chance to sync things up before the post-merge script runs on another (regular) git pull. (Or at least the output of the previous git fat push should lead you to the object file in question, which you can delete to force a re-sync the next time you run git fat pull.)
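If you’d like to automate the web host side, here’s a sketch of the pull-then-fat-pull sequence as a function a cron job could call. update_munki_repo and the repo path are hypothetical, and --ff-only is my addition (the web host shouldn’t have local commits, so refusing anything but a fast-forward seems safer). It stops before git fat pull if the regular pull fails.

```bash
# Hypothetical update step for the munki web host. The body runs in a
# subshell so the cd doesn't leak into the caller's shell.
update_munki_repo() (
  cd "$1" || exit 1
  # Regular pull; this is what fires the post-merge hook above
  git pull --ff-only || exit 1
  # Then rsync and check out any fat objects not present locally yet
  git fat pull
)

# Usage, e.g. from a script run by cron as the user that owns the repo:
#   update_munki_repo /srv/munki_repo
```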

I hope your team can get some value out of this (relatively) lightweight way of adding collaborators and making quick changes to your munki git repo with any local tool you’d like. Please test and gauge your team’s comfort level with this solution before deploying! The nice thing about it is that very little setup is required, and you can test with a minimal repo of only a handful of file types to prove it out and demo it to coworkers before flipping the switch. Good luck!
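For that minimal demo repo, a sketch like this builds a throwaway sandbox with a local directory as the fat-store, mirroring the localhost:/tmp/fat-store example. make_fat_sandbox and the paths are hypothetical, and it only runs git fat init when git-fat is on your PATH. Note that rsync’s host:path form still goes over ssh even to localhost, so ssh logins to the machine itself need to work (Remote Login on a Mac).

```bash
# Hypothetical sandbox: a throwaway repo whose fat-store is a local directory.
make_fat_sandbox() {
  local root="$1"
  mkdir -p "$root/fat-store" "$root/repo/pkgs" "$root/repo/icons" || return 1
  git init -q "$root/repo" || return 1
  printf '[rsync]\nremote = localhost:%s\n' "$root/fat-store" > "$root/repo/.gitfat"
  printf '*.pkg filter=fat -crlf\n*.png filter=fat -crlf\n' > "$root/repo/.gitattributes"
  if command -v git-fat >/dev/null 2>&1; then
    (cd "$root/repo" && git fat init)
  else
    echo "git-fat not on PATH; skipped git fat init" >&2
  fi
}

# Usage:
#   make_fat_sandbox /tmp/fat-demo
#   cd /tmp/fat-demo/repo && git add .gitfat .gitattributes && git commit -m "git-fat config"
#   # copy in a small .pkg or .png, git add/commit it, then: git fat push
```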
